Fiber optic strands have a central core of material with a high refractive index surrounded by a cladding of material with a slightly lower index. The ratio of the two is set to cause total internal reflection, where the light is confined to the core and won't leak out into the cladding.

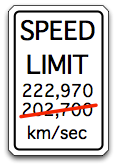

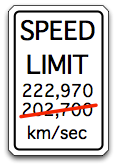

The refractive index of a medium is the ratio of the speed of light in vacuum to the speed of light in that medium. The speed of light in vacuum is roughly 300,000 kilometers per second, which corresponds to an index of 1. The core of a typical fiber optic cable has an index of 1.48, so the speed of light there is about (300,000/1.48) = 202,700 kilometers per second.
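As a quick check of that arithmetic, here is a minimal Python sketch. The function and constant names are my own, chosen for illustration, and 300,000 km/s is the rounded figure used in the text rather than the exact physical constant.

```python
# Rounded speed of light in vacuum, as used in the text (km/s).
C_VACUUM_KM_S = 300_000.0


def speed_in_medium(refractive_index, c_vacuum=C_VACUUM_KM_S):
    """Speed of light in a medium: vacuum speed divided by the index."""
    if refractive_index < 1.0:
        raise ValueError("refractive index of a normal medium is >= 1")
    return c_vacuum / refractive_index


if __name__ == "__main__":
    core_speed = speed_in_medium(1.48)
    print(f"speed in fiber core: {core_speed:,.0f} km/s")
```

The text rounds the result to 202,700 km/s, which is precise enough for the estimates that follow.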

Impact

It is roughly 8,200 kilometers from Tokyo to San Francisco.

The round trip time through transpacific fibers due solely to speed of light is roughly (2 * 8,200 km / 202,700 km/sec) = 81 milliseconds. Fibers do not run directly from the San Francisco Bay to the Tokyo harbor, so the actual distance is somewhat longer. Traceroute across the NTT network shows the round trip across the ocean is about 100 msec. A small portion of this is FIFO delay in regenerators along the ocean floor and queueing delay in switches at either end. Another portion is software overhead, as traceroute is handled in the slowpath of typical routers. The rest is the time it takes for light to propagate across the span.

     7  ae-7.r20.snjsca04.us.bb.gin.ntt.net (129.250.5.52)  50.115 ms
        ae-8.r21.snjsca04.us.bb.gin.ntt.net (129.250.5.56)  51.020 ms
        ae-7.r20.snjsca04.us.bb.gin.ntt.net (129.250.5.52)  50.165 ms
     8  as-0.r21.tokyjp01.jp.bb.gin.ntt.net (129.250.5.82)  154.821 ms
        as-2.r20.tokyjp01.jp.bb.gin.ntt.net (129.250.2.35)  147.516 ms  153.187 ms
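The theoretical and measured numbers can be put side by side with a short Python sketch. The names are illustrative. Subtracting the mean of the San Jose samples from the mean of the Tokyo samples is my own rough way of isolating the ocean crossing, not a rigorous measurement.

```python
C_VACUUM_KM_S = 300_000.0   # rounded value used in the text
CORE_INDEX = 1.48           # typical fiber core index from the text
DISTANCE_KM = 8_200.0       # rough Tokyo to San Francisco distance


def propagation_rtt_ms(distance_km, refractive_index):
    """Round trip time in milliseconds due only to the speed of light in fiber."""
    speed_km_s = C_VACUUM_KM_S / refractive_index
    return 2 * distance_km / speed_km_s * 1000.0


def mean(samples):
    return sum(samples) / len(samples)


# Round trip times copied from the traceroute output (milliseconds).
hop7_san_jose_ms = [50.115, 51.020, 50.165]
hop8_tokyo_ms = [154.821, 147.516, 153.187]

theoretical_ms = propagation_rtt_ms(DISTANCE_KM, CORE_INDEX)
measured_ms = mean(hop8_tokyo_ms) - mean(hop7_san_jose_ms)

if __name__ == "__main__":
    print(f"theoretical RTT: {theoretical_ms:.1f} ms")
    print(f"measured RTT across the ocean: {measured_ms:.1f} ms")
    print(f"unexplained by great-circle propagation: {measured_ms - theoretical_ms:.1f} ms")
```

The gap between the two figures is what the longer cable route, regenerators, queueing, and slowpath handling have to account for.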

Suggestion

100 Gigabit Ethernet is nearly done, with products already available on the market. Research into technologies for Terabit links is ramping up now, including a project at UCSB which triggered this musing. Dan Blumenthal, a UCSB professor involved in the effort, said that new materials for the fiber optics might be considered: "We won't start out with that, but it'll move in that direction," (quoting from Light Reading).

Fiber with a 10% lower refractive index would cut the propagation delay by 10%, since light would travel about 11% faster in the medium. It would decrease the round trip time across the Pacific from ~100 msec to ~90 msec. One of my favorite Star Trek lines is from Déjà Q, a casual suggestion to "Change the gravitational constant of the universe." This is a case where we can make the web faster by changing the speed of light, though we need only do so within fiber optic cables and not the entire universe.
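Here is a small Python sketch of that what-if. Speed scales as 1/n while delay scales as n, so the two percentages differ slightly. The 1.48 baseline comes from earlier in the post. The 10% reduction is the hypothetical under discussion, not a property of any real fiber I know of, and the names are illustrative.

```python
C_VACUUM_KM_S = 300_000.0   # rounded value used in the text
BASE_INDEX = 1.48           # typical fiber core index from the text
DISTANCE_KM = 8_200.0       # rough Tokyo to San Francisco distance


def propagation_rtt_ms(distance_km, refractive_index):
    """Round trip propagation delay in milliseconds."""
    return 2 * distance_km * refractive_index / C_VACUUM_KM_S * 1000.0


# Hypothetical fiber with a 10% lower index (an assumption for illustration).
lowered_index = BASE_INDEX * 0.9

speed_gain = BASE_INDEX / lowered_index - 1.0          # fractional speed increase
base_rtt = propagation_rtt_ms(DISTANCE_KM, BASE_INDEX)
new_rtt = propagation_rtt_ms(DISTANCE_KM, lowered_index)
delay_cut = 1.0 - new_rtt / base_rtt                   # fractional delay reduction

if __name__ == "__main__":
    print(f"index: {BASE_INDEX:.3f} -> {lowered_index:.3f}")
    print(f"speed increase: {speed_gain:.1%}")
    print(f"propagation RTT: {base_rtt:.1f} ms -> {new_rtt:.1f} ms ({delay_cut:.0%} lower)")
```

Only the propagation part of the measured ~100 msec shrinks. Queueing and software overhead stay the same, so the real-world saving would be a little under 10 msec.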

Practicalities

I admit that I have absolutely no understanding of the chemistry involved in fiber optics. Silica is doped with compounds to get the desired properties, including some which raise or lower the refractive index. There are tradeoffs between clarity/lossiness, dispersion, and refractive index which I don't understand. However, I think it's important to properly weigh the value of lowering the refractive index: it makes the web faster. We can do a lot with caching content locally and distributing datacenters around the planet, but in the end sometimes bits need to go off to find the original source no matter where it might be.

Also, to state it clearly, this consideration is only applicable to long range lasers, with a reach in the tens of kilometers. The initial Terabit Ethernet work will almost certainly be on short range optics for use within facilities, where the propagation delay is insignificant compared to other delays in the system. It's more important to optimize the power consumption and cost of short range lasers than to worry about microseconds of delay. Long reach optics have different constraints, and there we have a once-in-a-generation opportunity to make wide area networks faster.
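To put "microseconds" in perspective, here is a rough Python sketch of one-way propagation delay over different reaches. The 100 m figure is my assumption for a run within a facility, and 10 km stands in for the low end of long reach. Neither number comes from a specification.

```python
C_VACUUM_KM_S = 300_000.0   # rounded value used in the text
CORE_INDEX = 1.48           # typical fiber core index from the text


def one_way_delay_us(distance_km, refractive_index=CORE_INDEX):
    """One-way propagation delay in microseconds over a fiber of the given length."""
    return distance_km * refractive_index / C_VACUUM_KM_S * 1e6


# Illustrative reaches (assumptions, not taken from any standard).
reaches_km = {
    "in-facility run (100 m)": 0.1,
    "long reach link (10 km)": 10.0,
    "transpacific span (8,200 km)": 8_200.0,
}

if __name__ == "__main__":
    for label, km in reaches_km.items():
        print(f"{label}: {one_way_delay_us(km):,.2f} microseconds")
```

At 100 m the delay is about half a microsecond, so a 10% improvement saves tens of nanoseconds. Across the Pacific the same 10% is worth milliseconds.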
